Examining Artificial Intelligence: A Look at Potential Risks

In today’s world, it is difficult to escape the influence of artificial intelligence (AI). From smart recommendations on our phones to autonomous driving systems, AI is deeply embedded in our daily lives. While we enjoy the conveniences it brings, we often forget that our preferences, judgments, and emotions are subtly influenced by algorithms.

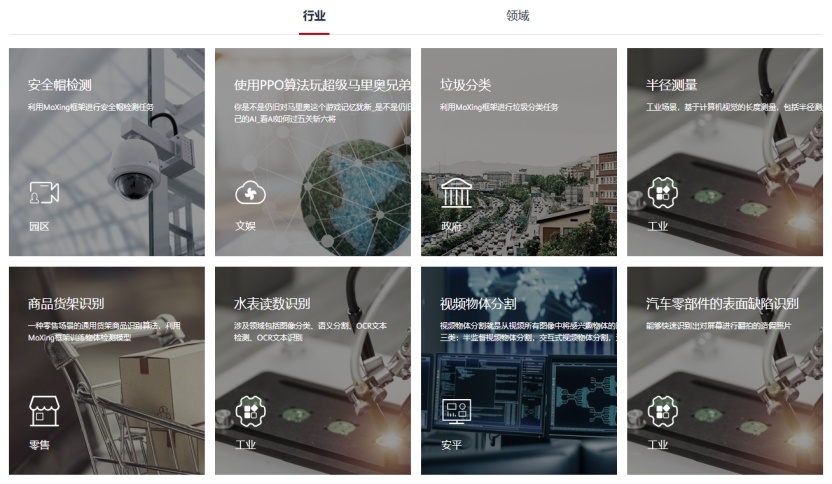

AI is not just an industry or a single product; it is an enabler across nearly all fields, including research, education, manufacturing, logistics, defense, law enforcement, politics, advertising, art, and culture. Because its behavior can be ambiguous and unpredictable, this enabler amplifies not only efficiency but also risks and biases. In this era of rapid AI development, society must confront those risks head-on.

1. The Ubiquity of AI: Risks Are No Longer Localized

Traditional technological risks are often confined to specific industries, such as chemical safety or power grid security. In contrast, AI’s broad applicability means that when its data, computing power, or algorithms fail, the impact extends beyond a single scenario to multiple sectors of society. For example, in November 2025, a database permission change at Cloudflare produced a malformed file for its machine-learning-based Bot Management system, triggering an outage that knocked a large share of websites worldwide offline.

2. The Opaque ‘Black Box’: Why Algorithms Cause Unease

Early AI systems followed rules written by humans; today’s systems learn on their own, building models of reality from vast amounts of data. Humans can check the outputs these systems produce but usually cannot obtain an explanation of what was learned or how a particular result was reached. As this autonomy grows, human control over algorithms shrinks, giving rise to what is known as the ‘algorithm black box.’ In low-risk scenarios, we might accept ‘not knowing the reason but generally being right,’ but in critical areas involving human life, social equity, or national interests, such uncertainty is intolerable.

3. A ‘Dictionary of Bias’: How Data Leads to Algorithmic Discrimination

The ‘knowledge’ that AI possesses comes from the data developers use to train it. If this data contains biases or stereotypes, the resulting model will inherit them. In everyday life, algorithmic discrimination can be subtle: a job-recommendation system trained on historical hiring records that favored men may show fewer openings to women; credit-scoring models may impose higher thresholds on certain demographic groups; and social media platforms may push markedly different content to users based on gender or age, reinforcing stereotypes. In this way, algorithms can inadvertently deepen existing social inequalities.

4. ‘Serious Nonsense’: How Algorithmic Hallucination Creates False Cognitive Traps

Despite its advanced learning and generation capabilities, AI still suffers from ‘algorithmic hallucination’: it fabricates plausible-sounding information with no factual basis, from non-existent academic papers to invented legal texts. Hallucinations are especially likely when a model is asked about unfamiliar domains or questions beyond the scope of its training data. The consequences range from minor misunderstandings to academic misconduct and legal liability, and as fabricated content spreads, they can erode public trust.

5. From ‘Helpful Guide’ to ‘Information Bubble’: The Risks That Are Deliberately Hidden

A tour guide may tailor routes and accommodations to our preferences, steering us away from undesirable areas and showing us only a ‘permitted’ view of a place. When the same logic is applied to AI-driven online spaces, the risks become harder to see: personalized recommendations amplify our existing interests, creating a comfortable yet enclosed ‘information bubble.’ Meanwhile, content moderation algorithms filter vast amounts of information, yet users cannot see what has been filtered out or judge whether those removals were justified.

6. From ‘Effective Assistant’ to ‘Cognitive Crutch’: How AI Erodes Independent Thinking

As the technology evolves, our reliance on AI is likely to grow. With AI’s emergence, humans are no longer the sole explorers of the world. Amid information overload, people may disengage from critical thinking, assuming there is no need to reflect on issues themselves and deferring to AI instead. This trend risks weakening our capacity for independent thought and critical analysis. Because AI largely integrates and recombines existing viewpoints and data, relying on it alone makes disruptive innovation harder to achieve. Over time, passively accepting AI’s perspectives may erode our desire to explore and question.

Conclusion

Artificial intelligence is no longer a distant future depicted in science fiction but a ‘human partner’ permeating various fields of reality. It can help us explore the mysteries of the world while simultaneously introducing unknown risks. AI is reshaping how humans experience the real world and even redefining human roles. If this redefinition is entirely driven by technological logic without ethical and institutional constraints, risks will escalate.

In the face of this quiet yet unstoppable revolution, we should neither idolize AI blindly nor stand still; instead, we should embrace it with courage while critically examining the shadows it may cast.
